Surviving the Reset: A Framework for AI Competitive Advantage

What separates the companies that will survive the correction.

TL;DR

AI companies face unique valuation challenges. Traditional metrics fail to capture displacement risk, capability thresholds, and market creation dynamics specific to AI. This framework provides a structured approach to evaluate what creates and sustains value in AI companies.

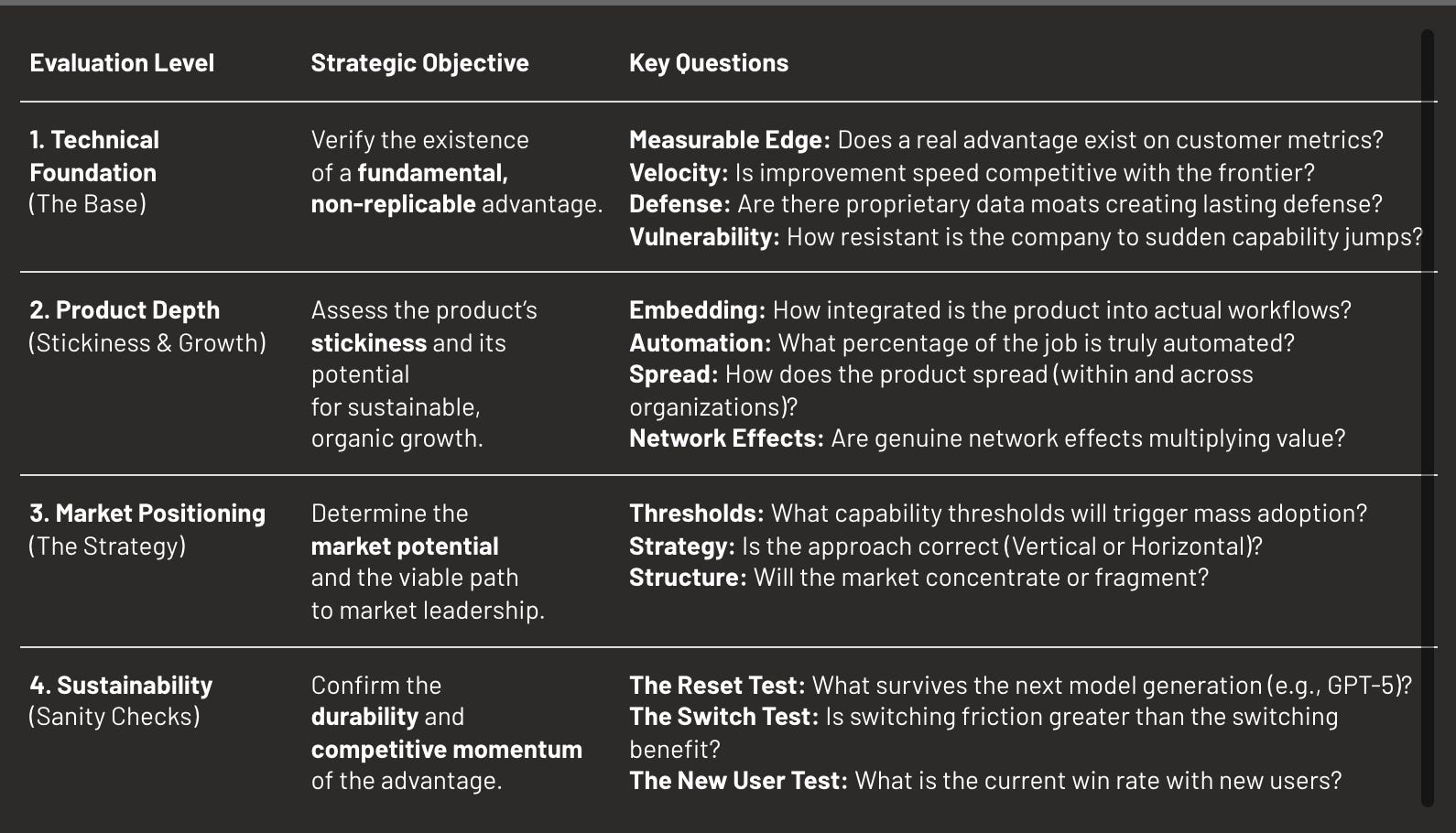

We propose a four-level hierarchy: Technical Foundation → Product Depth → Market Position → Sustainability. Each level builds on the previous one. Without technical edge, product integration fails. Without product depth, market position is fragile. Without all three, sustainability is impossible.

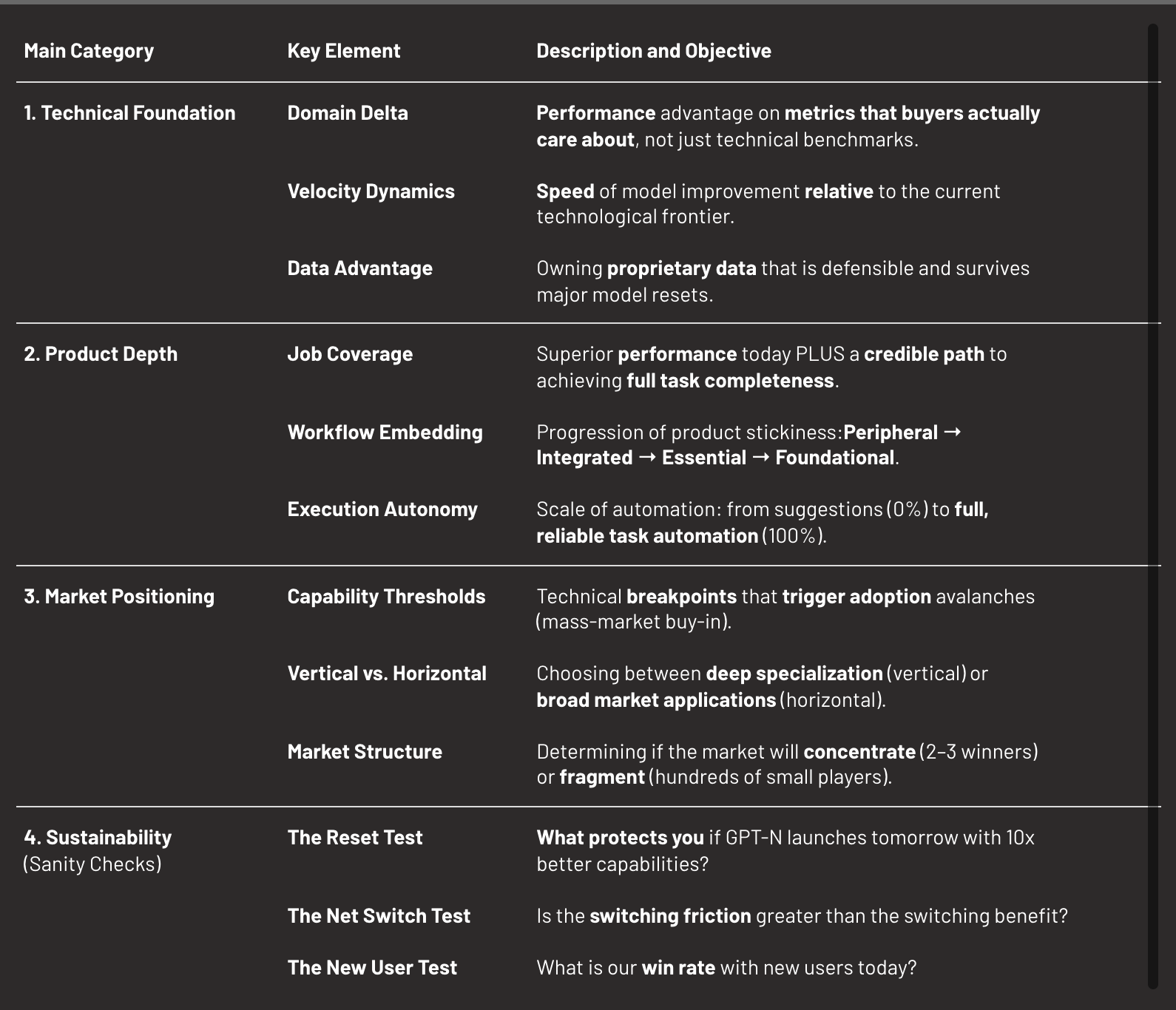

The Framework:

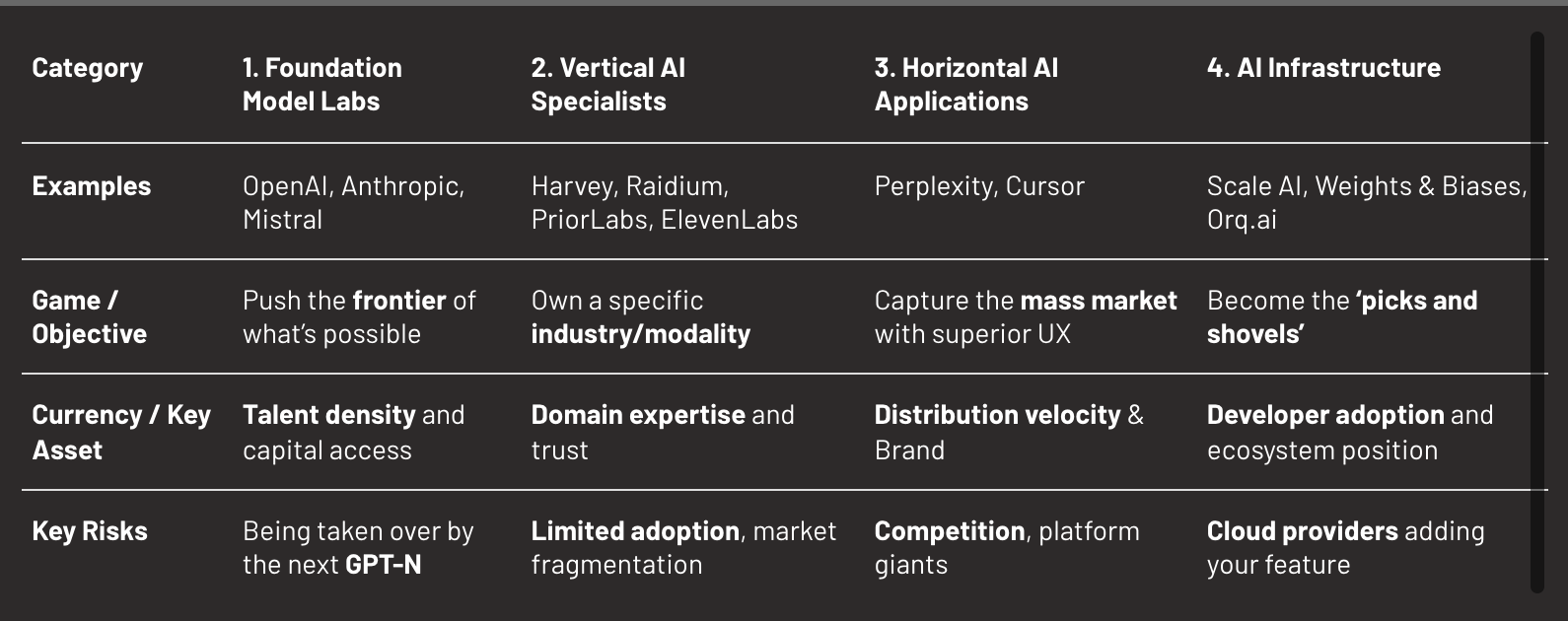

Not all AI companies play the same game. Four archetypes exist: General-Purpose Foundation Models race for frontier capabilities, Vertical Specialists own industries through trust, Horizontal Applications capture mass markets via distribution, and Infrastructure providers dominate developer ecosystems. The framework applies differently to each.

AI rewards compound advantages. Single moats erode quickly. Sustainable value requires layering multiple defences that reinforce each other. The game has just begun.

1. Introduction: Why AI Needs a Different Framework

1.1. The Problem with Traditional Approaches

SaaS valuation relies on predictable metrics: CAC/LTV, retention, growth rates. These assume stable markets, gradual innovation, and clear competitors. AI breaks these assumptions.

In AI, a new model release can make your product obsolete overnight. Markets don’t exist until capabilities create them. Competition comes simultaneously from startups, incumbents, and tech giants. Adoption happens in sudden jumps, not smooth curves.

Consider Jasper AI. In 2022, it was valued at $1.5B with strong metrics. Six months later, ChatGPT made its core offering nearly worthless. Traditional frameworks couldn’t predict this. The product didn’t gradually erode; it became irrelevant overnight when OpenAI crossed a capability threshold.

1.2. The Unique Dynamics of AI

Two characteristics fundamentally distinguish AI from traditional software markets:

- Displacement Risk: Unlike SaaS where features erode gradually, AI companies face overnight obsolescence from capability jumps. Stability AI lost its edge when Midjourney and DALL·E 3 leapfrogged it in quality. Dozens of GPT-3 copywriting startups vanished when GPT-4 made their templates irrelevant.

- Capability-Driven Market Creation: Traditional analysis assumes markets exist, but demand is endogenous in technology. In AI, capabilities create markets from nothing. Before November 2022, nobody budgeted for “AI assistants” because ChatGPT hadn’t created the category yet. Before ElevenLabs crossed the human-voice threshold, “AI dubbing” wasn’t a line item.

1.3. The Four Games in AI

Not all AI companies face the same dynamics. Four distinct archetypes exist, each with different rules:

- General-Purpose Foundation Model Labs (OpenAI, Anthropic, Mistral)

- Vertical AI Specialists (Harvey, Jimini, Raidium, PriorLabs, ElevenLabs)

- Horizontal AI Applications (Perplexity, Cursor)

- AI Infrastructure (Scale AI, Weights & Biases, Orq.ai)

1.4. Our Approach

This framework addresses these dynamics through four hierarchical levels:

- Technical Foundation: What unique capabilities exist and can they survive resets?

- Product Depth: How do capabilities become indispensable in workflows?

- Market Position: What market dynamics favour or threaten the position?

- Sustainability: Will the advantages compound or erode over time?

Each level depends on the previous ones. Technical edge without product integration remains academic. Product without market understanding is blind. All three without sustainability tests is wishful thinking.

2. [Part I] Technical Foundation

Technical advantage in AI differs fundamentally from traditional software. It’s not about having more features or better UI. It’s about performance gaps that survive resets, improvement velocity that outpaces alternatives, and data advantages that compound over time. These three elements — (i) Domain Delta, (ii) Velocity Dynamics, and (iii) Effective Data Advantage — form the technical foundation.

2.1 Domain Performance and Evolution

2.1.1 Domain Delta (ΔD)

Definition: Domain Delta measures the performance advantage on metrics buyers actually care about, tested under real-world production constraints.

Why it matters: Customers care about performance on their specific tasks under their specific constraints. A model that’s 99% accurate but takes 30 seconds is worthless for real-time applications. Academic papers with state-of-the-art results are promising signals, but they’re not sufficient for commercial success. The performance gap matters more as the business criticality of the use case increases.

What it measures: The gap between your performance and the next-best alternative, specifically on customer-relevant metrics under production constraints like latency, cost, and compliance requirements.

Drivers: task-specific optimisation that goes beyond general models, training that accounts for real-world constraints, domain expertise encoded into the system, and rigorous testing in production environments rather than lab conditions.
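As a rough illustration of the definition above, Domain Delta can be sketched as the gap to the best alternative that also satisfies the buyer's production constraints. The `Candidate` fields, the constraint parameters, and all numbers below are hypothetical; the article does not prescribe a formula, so this is one possible reading rather than the framework's official calculation.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    name: str
    accuracy: float       # score on the buyer-relevant metric, 0..1
    latency_s: float      # seconds per request in production
    cost_per_call: float  # currency units per request


def domain_delta(ours, alternatives, max_latency_s, max_cost_per_call):
    """Gap between our score and the best alternative that meets the constraints.

    Returns None if our own system violates a constraint, because a lab-only
    advantage is not counted as Domain Delta in this sketch.
    """
    def feasible(c):
        return c.latency_s <= max_latency_s and c.cost_per_call <= max_cost_per_call

    if not feasible(ours):
        return None
    # Assumption: with no feasible alternative, the baseline score is 0.
    best_rival = max((c.accuracy for c in alternatives if feasible(c)), default=0.0)
    return ours.accuracy - best_rival


specialist = Candidate("specialist", accuracy=0.92, latency_s=0.8, cost_per_call=0.02)
rivals = [
    Candidate("frontier", accuracy=0.99, latency_s=30.0, cost_per_call=0.10),
    Candidate("open", accuracy=0.88, latency_s=0.5, cost_per_call=0.01),
]
delta = domain_delta(specialist, rivals, max_latency_s=1.0, max_cost_per_call=0.05)
```

In this made-up example the frontier model scores higher in the lab but is excluded by the latency limit, so the specialist's delta is measured against the open model only.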

Archetype variations:

- General-Purpose Foundation Models: Must match frontier capability pace (3-4 month major releases) or become obsolete

- Vertical Specialists: Can improve models more slowly if domain advantage is strong enough

- Applications: Decouple product velocity from model velocity; they can use the same model for months while shipping weekly UX/feature improvements

- Infrastructure: Tool performance improvements are likewise decoupled from model velocity

Failure mode: Without meaningful Domain Delta, customers default to cheaper, more accessible general models.

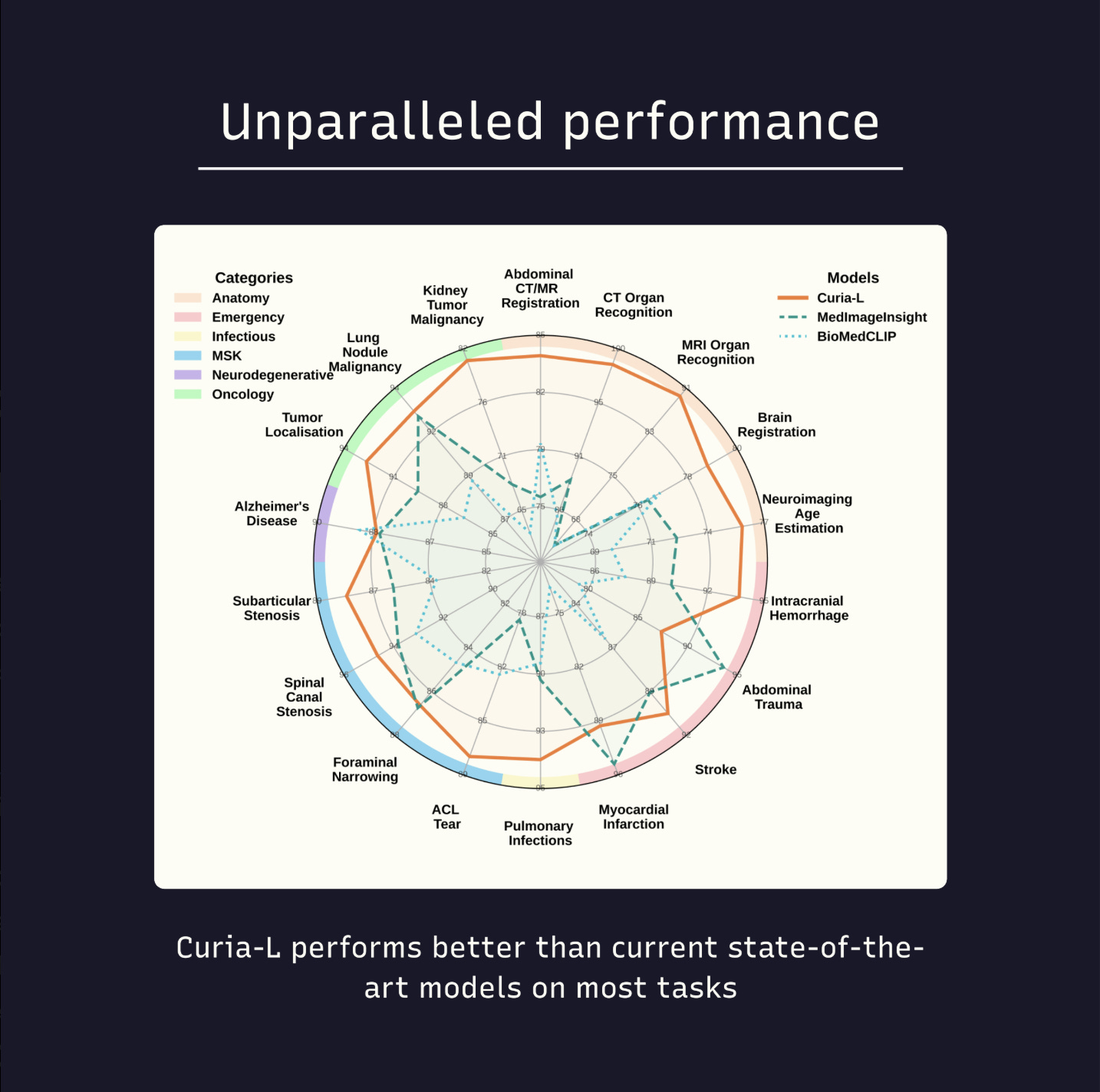

Example: Raidium’s Curia model in radiology. Its published benchmarks show state-of-the-art performance in detecting pathologies across multiple imaging modalities. Crucially, Curia demonstrates these gains while meeting clinical constraints on inference cost and latency — the criteria that matter to hospitals and radiologists.

Benchmark for Curia vs frontier/open models on buyer-relevant tasks (latency/cost annotated) — source

2.1.2 Velocity Dynamics

Definition: The rate of improvement relative to the frontier and direct competitors.

Why it matters: In AI, static advantage erodes quickly. What determines survival isn’t your current position but whether you’re improving faster than alternatives.
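To make "improving faster than alternatives" concrete, here is a minimal sketch that estimates how long a lead lasts. It assumes both sides improve linearly at a constant monthly rate on the same metric, which is a simplification introduced for illustration; the function name and inputs are hypothetical.

```python
def months_until_overtaken(gap, own_rate, rival_rate):
    """Months until a rival closes `gap`, given per-month improvement rates.

    Returns None when the rival is not improving faster, meaning the lead
    holds or widens under this linear model.
    """
    if gap < 0:
        raise ValueError("gap must be non-negative")
    closing_speed = rival_rate - own_rate
    if closing_speed <= 0:
        return None
    return gap / closing_speed


# A 6-point lead, improving 0.5 points/month against a rival at 2.0 points/month.
runway = months_until_overtaken(gap=6.0, own_rate=0.5, rival_rate=2.0)  # 4.0
```

The point of the sketch is that the current gap only sets the runway; the difference in rates decides whether there is a runway at all.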

Velocity requirements by archetype:

- General-Purpose Foundation Models: Must match frontier pace (3-4 month cycles) or become irrelevant

- Vertical Specialists: Can be slower (6-12 months) if deeply embedded

- Applications: Need weekly/monthly updates to maintain user engagement

- Infrastructure: Quarterly releases acceptable if APIs remain stable

Examples:

- Perplexity improves daily, with constant iteration based on user behaviour and feedback

- Orq.ai enables its customers to achieve 2-3x faster improvement cycles through better evaluation infrastructure

Failure mode: Companies with slow improvement cycles face gradual then sudden irrelevance as competitors pull ahead.

2.1.3 Effective Data Advantage (EDA)

Definition: The quality, uniqueness, and defensibility of data pipelines that create lasting competitive advantage.

Why it matters: Models commoditise quickly as techniques spread and compute becomes accessible. Data remains one of the few sustainable moats in AI.

Critical components include: exclusive access through contracts, partnerships, or privileged channels; quality pipelines with superior annotation, curation, and verification systems; refresh mechanisms that continuously collect new data; synthetic leverage; and robust data supply chains that avoid legal exposure.

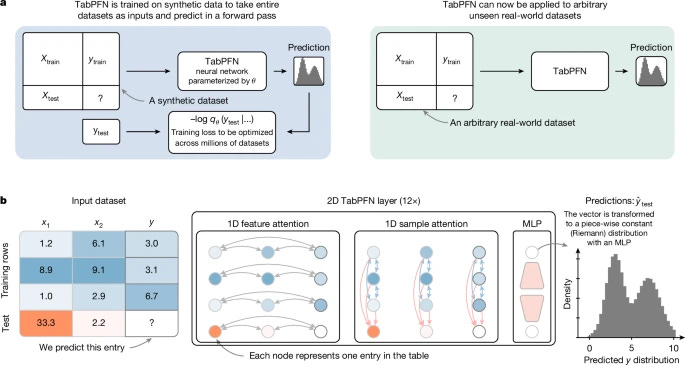

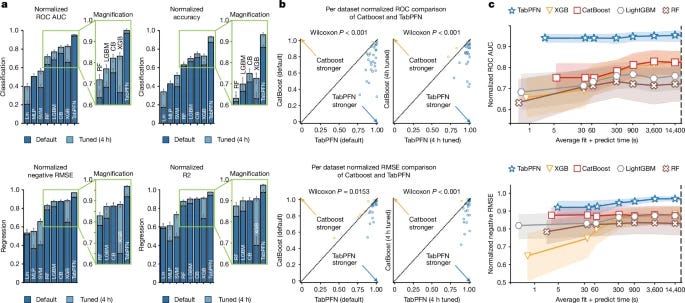

Example: PriorLabs’ TabPFN uses millions of synthetic datasets, generated via structural causal models, to pre-train its foundation model over diverse tabular prediction tasks. This gives it enormous synthetic leverage (SLF): it achieves state-of-the-art performance on small datasets with minimal training.

TabPFN’s pre-training on millions of synthetic datasets (above) + performance vs tuned baselines (below). Highlights how synthetic SLF allows strong ΔD even in small datasets.

Failure mode: Dependence on public data means your advantage erodes as soon as competitors or open-source models train on the same sources.

2.2 Displacement Vulnerability

Every AI company faces existential threats from two directions: sudden capability jumps that reset the entire playing field, and platform giants that could absorb entire categories.

2.2.1 Capability Displacement

Definition: The risk that a technical breakthrough makes your entire value proposition obsolete.

Why it matters: AI doesn’t evolve gradually. Each major model release can eliminate entire categories of companies overnight. The move from GPT-3.5 to ChatGPT wasn’t an incremental update; it was a category killer that destroyed thousands of startups.

To assess vulnerability, ask two critical questions:

- What technical advance would eliminate my advantage?

- What would remain valuable after that advance occurs?

Valid defences against displacement include: proprietary data that new models cannot access, deep workflow integrations, trust and relationships that transcend technical capabilities, and regulatory moats that take years for competitors to cross.

Examples:

- Jasper AI built its business on GPT-3’s need for templates and structured prompts. When ChatGPT could handle freeform requests, Jasper’s entire value proposition evaporated — after having raised $100M and reached $90M in ARR.

- Character.ai had unique memory systems that differentiated it. When GPT-4 arrived with vastly larger context windows, that differentiation largely disappeared.

- Tome built AI-generated slide decks and reached a $300M valuation with zero revenue. When Microsoft integrated similar generative capabilities directly into PowerPoint, Tome’s differentiation vanished.

2.2.2 AI Giants Threat

Definition: The risk that giants like OpenAI, Anthropic, Google, Microsoft, or Meta enter your space and absorb your market.

Assessment framework:

- Market size threshold: Giants need billion-user opportunities to justify investment.

- If your Total Addressable Market is under $10B, you’re likely safe from direct competition. Focus on building a niche monopoly.

- Between $10-100B, you might become an acquisition target. Competition will come mostly from other startups.

- Above $100B, expect direct competition. Build a unique, durable competitive moat.

- Platform dependency exposure: APIs, distribution channels, and data feeds. When platforms change pricing or policies, dependent startups often die overnight.

- Acquisition dynamics: Is the company more valuable to a giant as an independent company or absorbed into the giant’s ecosystem?
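The market-size bands in the assessment above can be written down as a small lookup. The function name and return labels are hypothetical, and the treatment of the exact boundaries ($10B and $100B falling in the middle band) is an assumption, since the article does not say which side they belong to.

```python
def giant_threat_by_tam(tam_usd_billions):
    """Map Total Addressable Market (in $B) to the article's three bands."""
    if tam_usd_billions < 0:
        raise ValueError("TAM cannot be negative")
    if tam_usd_billions < 10:
        return "likely_safe"          # focus on building a niche monopoly
    if tam_usd_billions <= 100:
        return "acquisition_target"   # competition mostly from other startups
    return "direct_competition"       # build a unique, durable moat


bands = [giant_threat_by_tam(t) for t in (3, 40, 250)]
```

This covers only the market-size criterion; platform dependency and acquisition dynamics still need a qualitative read.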

Risk by archetype:

- General-purpose foundation models: Direct competition likely

- Vertical Specialists: Acquisition more likely than competition

- Applications: High platform risk if in their path

- Infrastructure: Medium risk, often become acquisition targets

3. [Part II] Product Depth

Technical advantage without product depth is just an impressive demo. The most sophisticated AI means nothing if users don’t integrate it into their daily work.

3.1 Workflow Integration

3.1.1 Job Coverage Excellence (JCE)

Definition: The combination of superior performance on critical tasks today AND a credible path to complete job coverage tomorrow.

JCE has two core components:

- Current Strategic Advantage: Does AI solve the few tasks that create 80% of value better than incumbents? Think: the most time-consuming and repetitive work, highly error-prone processes, and bottlenecks limiting growth.

- Expansion Credibility: Will you cover the entire feature set desired by clients? Buyers evaluate architecture flexibility, development velocity, vision alignment, and resources in hand.

Examples:

- Vanta began with SOC 2 compliance but credibly promised to become the entire security and compliance platform

- Ramp began with expense management (20% of the CFO stack) and is credibly expanding to a full finance suite

Failure mode: Trying to match incumbents feature-for-feature from day one, diluting focus on strategic superiority.

3.1.2 Workflow Embedding (WE)

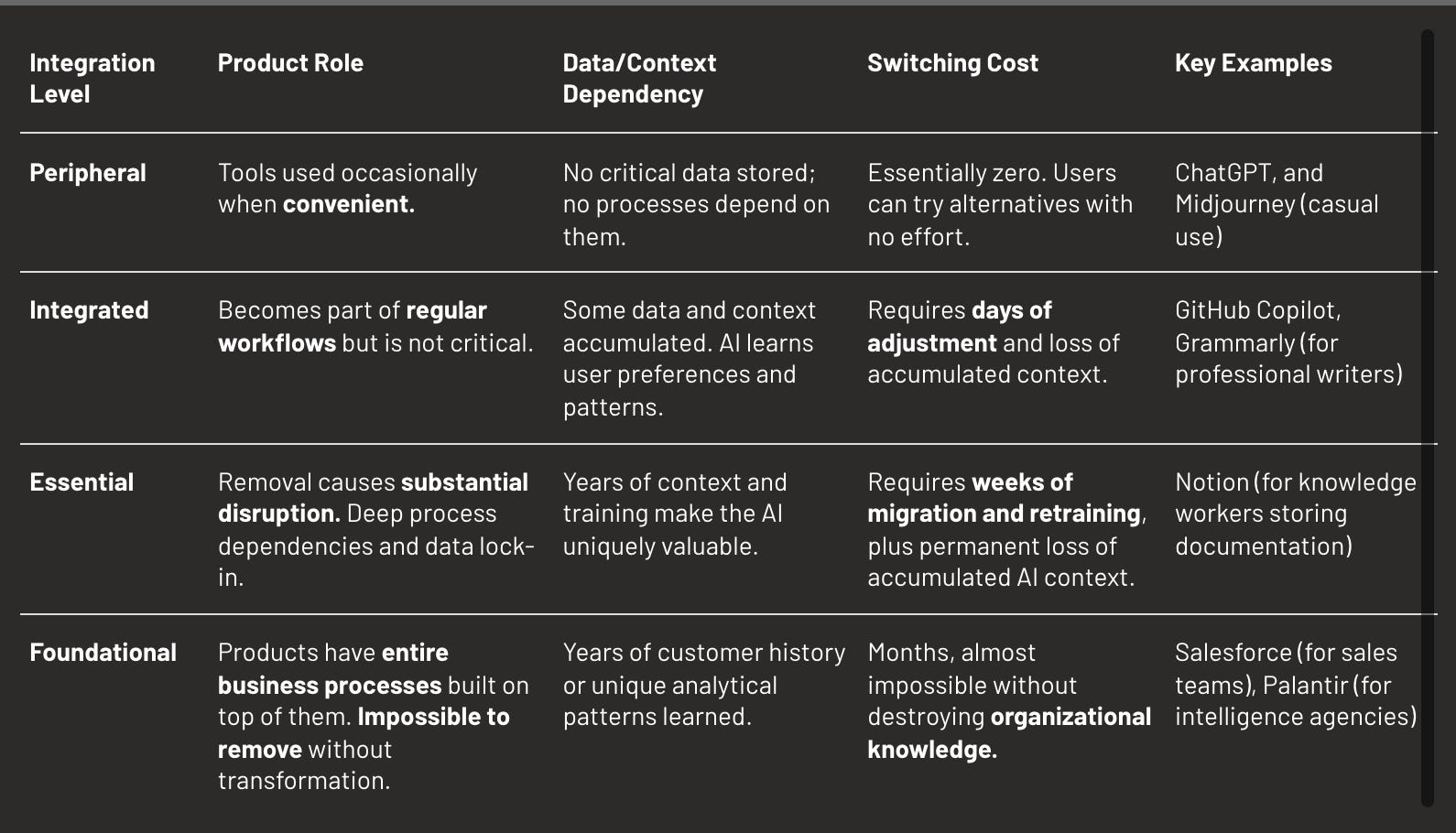

Definition: The degree to which a product becomes embedded in critical business workflows and processes.

Products exist on a spectrum of embedding:

- Peripheral: Tools are used occasionally when convenient.

- Integrated: Products become part of regular workflows but aren’t critical.

- Essential: Systems would cause significant disruption if removed.

- Foundational: Entire business processes built on top of them.

Embedding patterns by archetype:

- General-purpose Foundation Models: Often stay peripheral (API calls)

- Vertical Specialists: Must reach “Essential” or die

- Applications: Success requires “Integrated” minimum

- Infrastructure: “Foundational” is the only winning position
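The embedding spectrum and the per-archetype bars above can be expressed as an ordered enum plus a lookup. This is a sketch: the identifiers are hypothetical, and the minimum assigned to foundation models is an assumption, since the article only says they "often stay peripheral".

```python
from enum import IntEnum


class Embedding(IntEnum):
    PERIPHERAL = 1    # used occasionally when convenient
    INTEGRATED = 2    # part of regular workflows but not critical
    ESSENTIAL = 3     # removal would cause significant disruption
    FOUNDATIONAL = 4  # entire business processes are built on top


# Minimum level per archetype, read off the list above.
# Assumption: no bar above PERIPHERAL is imposed on foundation models.
MINIMUM_LEVEL = {
    "foundation_model": Embedding.PERIPHERAL,
    "vertical_specialist": Embedding.ESSENTIAL,
    "application": Embedding.INTEGRATED,
    "infrastructure": Embedding.FOUNDATIONAL,
}


def meets_embedding_bar(archetype, level):
    """True if `level` is at or above the archetype's minimum embedding level."""
    return level >= MINIMUM_LEVEL[archetype]


ok = meets_embedding_bar("application", Embedding.ESSENTIAL)             # True
short = meets_embedding_bar("vertical_specialist", Embedding.INTEGRATED)  # False
```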

Example: Darktrace in cybersecurity doesn’t just monitor, it integrates with Active Directory, cloud platforms, and network infrastructure, learning normal behaviour patterns unique to each organisation over months.

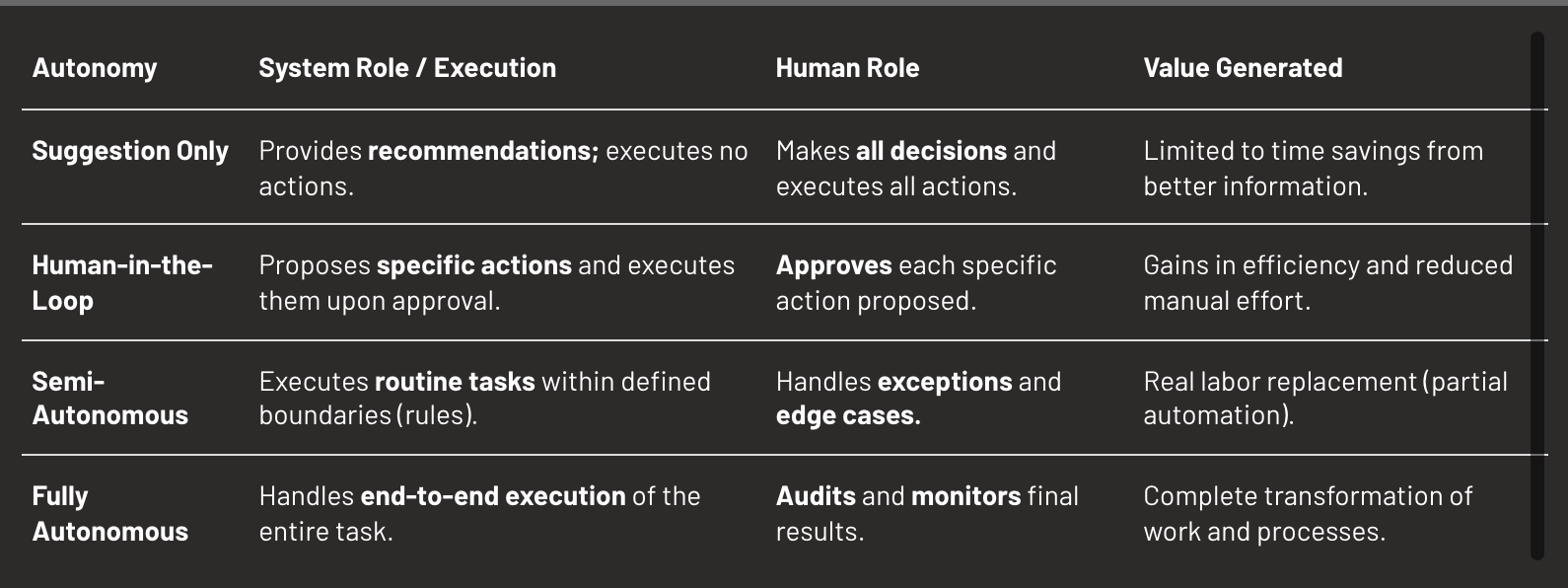

3.1.3 Execution Autonomy (ACS)

Definition: The percentage of a job that the system executes autonomously without human intervention.

The autonomy spectrum progresses through distinct levels: suggestion only, human-in-the-loop, semi-autonomous and fully autonomous.

Moving up this spectrum requires earning greater trust, based on both objective and perceived reliability: accuracy thresholds, regulatory approval where required, insurance and liability coverage, and cultural acceptance of AI decision-making.

Example: Relief AI moves beyond recommendations by generating advanced invoices grounded in the users’ knowledge base.

3.2 Distribution and Network Effects

3.2.1 Distribution Power

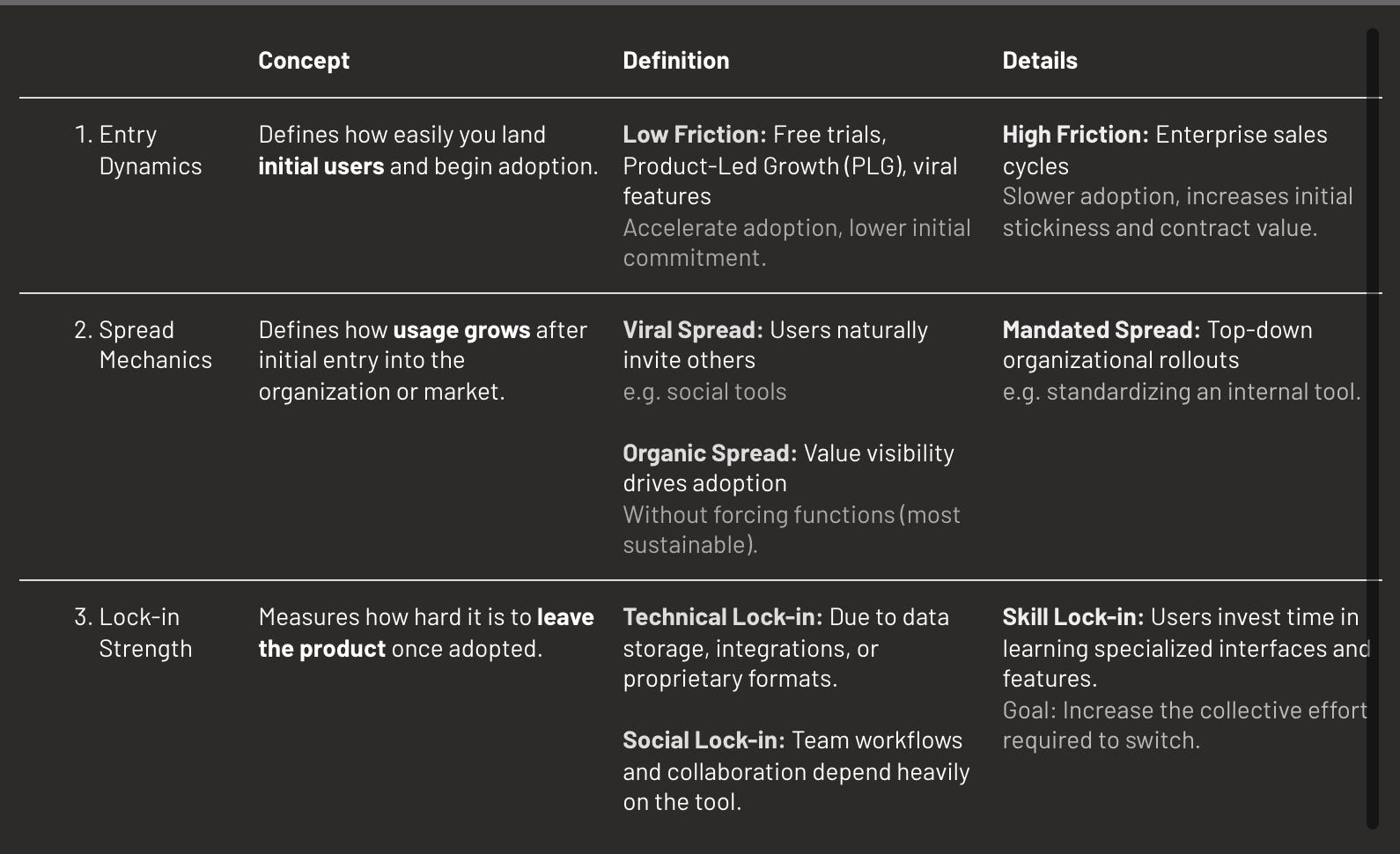

Definition: The efficiency and defensibility of how a product spreads within and across organisations.

Three dynamics determine distribution success: entry, spread and lock-in.

Examples of strong distribution:

- Cursor enters through individual developers trying it solo, spreads when teams see productivity gains, then locks in through shared workspace configurations.

- Contrast lands rapidly because it is available in HubSpot’s marketplace, bypassing long procurement cycles.

3.2.2 Network Effects in AI

Definition: Value multiplication effects where each additional user, data point, or integration makes the product more valuable for all participants.

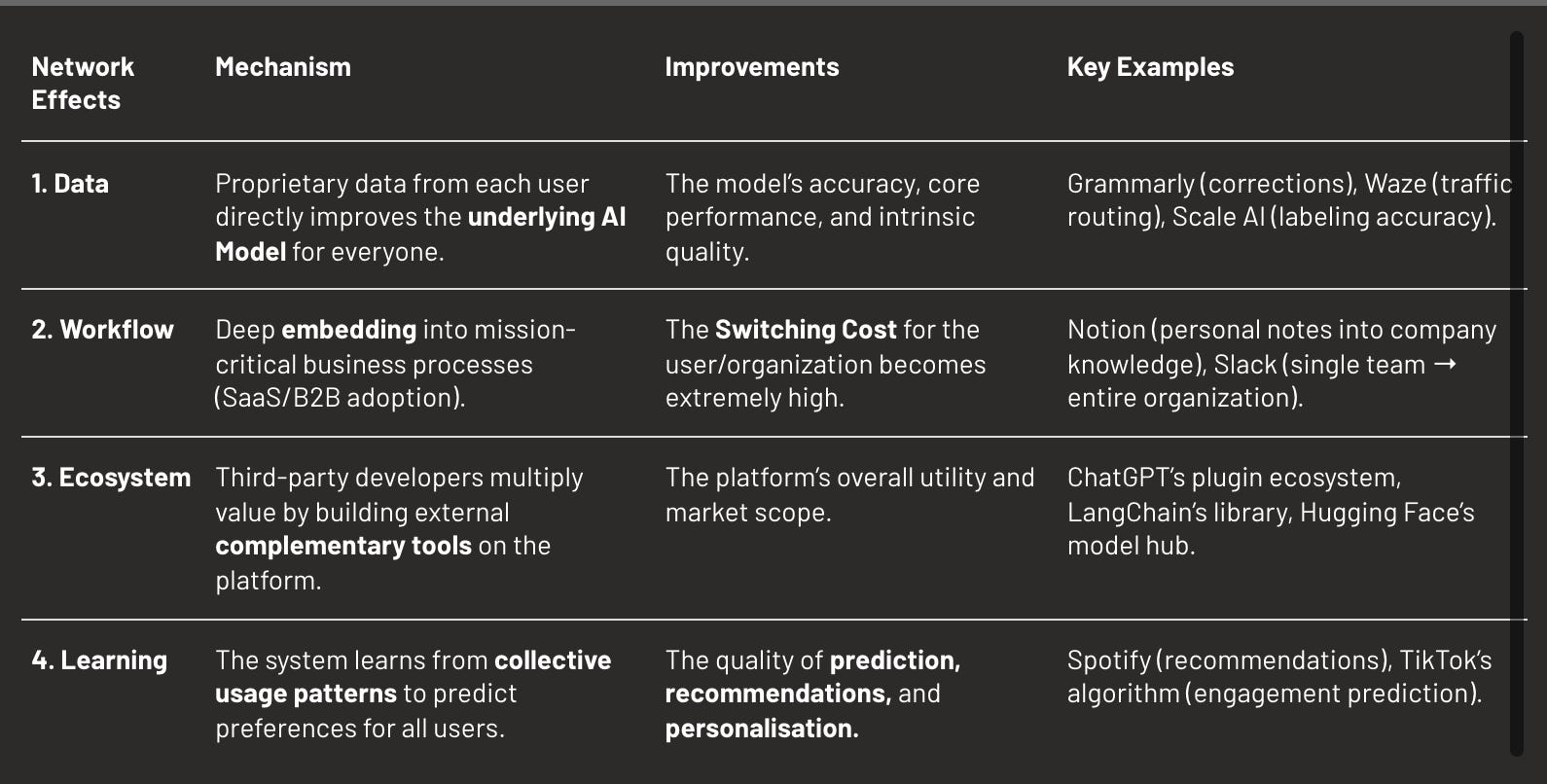

AI creates unique types of network effects (NFX): data, workflow, ecosystem and learning.

Network effect potential by archetype:

- General-purpose Foundation Models: Strong data network effects from RLHF

- Vertical Specialists: Limited to workflow network effects within industry

- Applications: Full spectrum possible (data, viral, ecosystem)

- Infrastructure: Developer ecosystem effects critical

Example: Hugging Face’s model hub creates all four network effects: Data (500k+ models improve through community feedback), Workflow (integration in one project drives adoption in others), Ecosystem (15,000+ contributors create value), Learning (system learns optimal model recommendations). Result: 10M+ users, $4.5B valuation despite no proprietary models.

4. [Part III] Market Positioning & Competitive Dynamics

4.1 Market Creation

4.1.1 Capability Thresholds

Definition: Technical break points that trigger mass market adoption.

Three types of thresholds trigger adoption:

- Performance thresholds activate when accuracy crosses 95% for medical diagnosis, latency drops below 100ms for real-time applications, or reliability exceeds 99.9% for production systems.

- Cost thresholds unlock markets when AI becomes 10x cheaper than alternatives, when cost drops below value created making ROI obvious, or when marginal cost approaches zero.

- Ease thresholds democratise adoption when no training is required, natural language interfaces eliminate learning curves, or single-click deployment removes technical barriers.
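The quantitative thresholds in the list above can be checked mechanically. The sketch below uses the article's example values (95% accuracy, 100ms latency, 99.9% reliability, 10x cost advantage) as fixed constants, which is a simplification: in practice each threshold is domain-specific. The function name and parameters are hypothetical, and ease thresholds are left out because they are qualitative.

```python
def crossed_thresholds(accuracy=None, latency_ms=None, reliability=None,
                       alternative_cost=None, ai_cost=None):
    """Return the names of the example thresholds that the given metrics cross.

    Metrics left as None are skipped rather than treated as failures.
    """
    crossed = []
    if accuracy is not None and accuracy > 0.95:
        crossed.append("accuracy")
    if latency_ms is not None and latency_ms < 100:
        crossed.append("latency")
    if reliability is not None and reliability > 0.999:
        crossed.append("reliability")
    if alternative_cost is not None and ai_cost is not None and ai_cost > 0:
        # "10x cheaper" is read here as alternative cost / AI cost >= 10.
        if alternative_cost / ai_cost >= 10:
            crossed.append("cost")
    return crossed


result = crossed_thresholds(latency_ms=80, alternative_cost=50.0, ai_cost=4.0)
```

Here the hypothetical product is fast enough and 12.5x cheaper than the alternative, so both the latency and cost thresholds are reported as crossed.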

Examples:

- Voice AI existed for decades as robotic text-to-speech. When ElevenLabs crossed the “indistinguishable from human” threshold in 2022, the market exploded from millions to hundreds of millions in 18 months.

- Code completion was a novelty for years. When GitHub Copilot crossed the “actually helpful more than annoying” threshold in 2021, it transformed software development.

- Image generation was an academic curiosity. When Midjourney crossed “professionally usable” quality in 2022, it disrupted the creative industry.

4.1.2 Adoption Patterns

Definition: How markets adopt AI solutions based on their tolerance for imperfection and need for reliability.

Markets fall into distinct categories:

- “Good Enough” markets accept 80% accuracy because errors are tolerable, quality is subjective, or the use case is creative rather than critical. These markets adopt quickly through viral consumer spread. Content creation, design tools, and research assistants thrive here. The strategy is to move fast and prioritise reach over perfection.

- “Excellence Required” markets demand 99%+ accuracy because errors have serious consequences. These markets adopt slowly through careful enterprise evaluation. Healthcare, finance, and legal applications face this bar.

4.2 Strategic Positioning

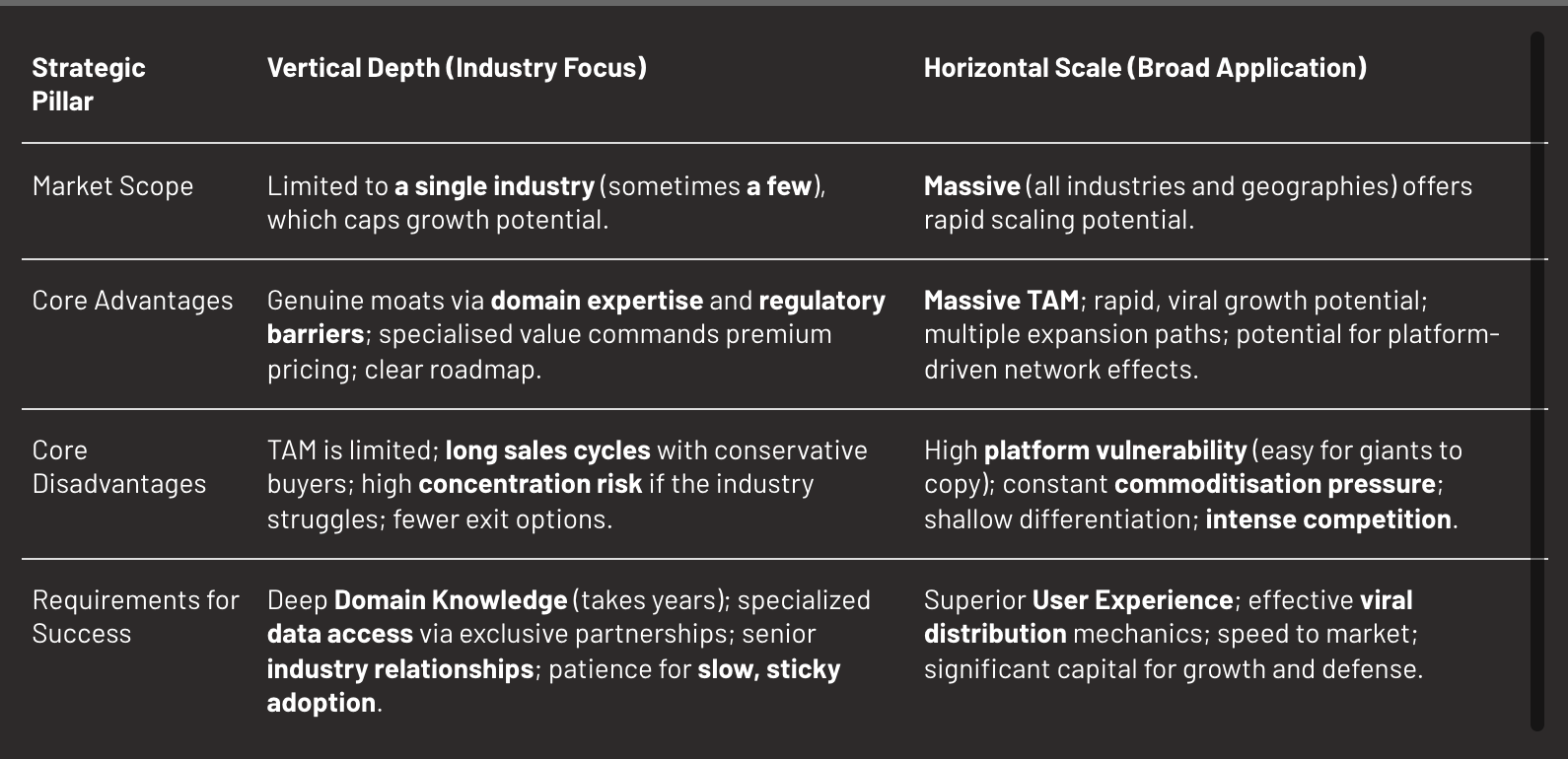

4.2.1 Vertical vs Horizontal Strategy

Definition: The fundamental choice between depth in one domain versus breadth across many.

Note: This choice is largely predetermined by archetype. General-purpose Foundation Models and Applications typically go horizontal. Specialists are vertical by definition. Infrastructure can go either way: Weights & Biases (horizontal) vs Ironclad (vertical for legal).

It’s also worth noting that the line between horizontal and vertical is not sharp. Horizontal players tend to build versions adapted to certain industries. Vertical players tend to expand not only geographically but also into adjacent markets.

4.2.2 Market Structure Evolution

Definition: How markets naturally evolve toward concentration or fragmentation based on underlying economics and network effects.

Key parameters predicting concentration: Network Effects Strength, Data Flywheel Dynamics, Switching Costs Magnitude, Capital Requirements, Standardisation Potential, Regulatory Complexity, Economies of Scale.

Examples:

- General-purpose foundation models: Concentrated — Strong network effects + data flywheel + billions in capital + massive economies of scale = 2-3 winners (OpenAI, Anthropic, Google)

- Voice synthesis: Concentrated — Data flywheel from usage + brand effects + quality threshold = concentrating around ElevenLabs

- Vertical AI applications: Fragmented — Low standardisation + domain expertise requirements + modest capital + rapid innovation = hundreds of specialists

- AI marketing tools: Fragmented — Diverse use cases + low switching costs + rapid innovation + minimal scale advantages = thousands of niches

5. [Part IV] Sustainability

Building advantage is difficult. Maintaining it is harder. AI’s rapid evolution means every position faces constant erosion. Three sanity checks validate whether advantages will survive.

5.1 The Three Sanity Checks

5.1.1 The Reset Test

Question: “If GPT-5, Claude-4, or Gemini-2 launches tomorrow with 10x better capabilities, what protects you?”

Why it matters: Major capability resets happen every 12-18 months. Companies that survive these resets have answers beyond “we’re better today.”

Valid protection against resets includes:

- Proprietary data moats with unique datasets that new models cannot access or replicate

- Infrastructure ownership where specialised systems create barriers competitors cannot quickly replicate

- Workflow lock-in so deep that model improvements alone don’t change buying decisions

- Regulatory barriers like certifications and compliance that take years to achieve

- Trust capital in the form of relationships and reputation that transcend technical capabilities

- Network effects where value multiplies with users regardless of underlying model quality

Invalid protection: “we’re ahead today,” “we have more features,” “we know the industry,” “we have funding.”

5.1.2 The Net Switch Test

Formula: Switching Friction / Switching Benefit = Net Switching Barrier

Where:

- Friction = time + effort + risk of switching

- Benefit = performance gain × cost savings × urgency to switch

Why it matters: High friction alone doesn’t create moats if the benefit of switching is even higher. The ratio between friction and benefit determines true stickiness.
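A worked version of the formula, as a minimal sketch. The article does not specify units, so this example assumes every input is scored on the same hypothetical 1-5 scale. The absolute result therefore depends on that scale and is only meaningful for comparing scenarios.

```python
def net_switching_barrier(time, effort, risk,
                          performance_gain, cost_savings, urgency):
    """Switching Friction / Switching Benefit, following the formula above.

    Friction is additive (time + effort + risk); benefit is multiplicative
    (performance gain x cost savings x urgency).
    """
    friction = time + effort + risk
    benefit = performance_gain * cost_savings * urgency
    if benefit <= 0:
        return float("inf")  # nothing to gain from switching
    return friction / benefit


# Same friction, two rivals: a marginally better one and a post-reset one.
today = net_switching_barrier(4, 3, 5, performance_gain=2, cost_savings=1, urgency=2)
after_reset = net_switching_barrier(4, 3, 5, performance_gain=5, cost_savings=2, urgency=3)
```

With these made-up scores the barrier drops from 3.0 to 0.4 even though the friction never changed. Because benefit is multiplicative, a capability jump can shrink the barrier much faster than integrations can raise it.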

5.1.3 The New User Test

Question: “Of 10 new users entering the market today, how many choose you?”

Why it matters: New users have no switching costs. They choose based purely on immediate benefits and anticipated viability. High retention with low new-user acquisition indicates a dying product surviving on lock-in.

5.2 Dependencies and Trade-offs

Success requires alignment across all levels of the framework:

- Technical edge without product depth equals impressive demos that don’t stick. Many AI startups have genuinely superior models, but fail to embed in workflows.

- Product depth without continuous improvement leads to gradual erosion. High friction only buys time — if you don’t use that time to improve, you’ll still lose.

- Vertical depth without true specialisation delivers the worst of both worlds. Some companies claim vertical focus but offer generic solutions with minimal customisation.

- Horizontal scale without differentiation guarantees a race to the bottom. Competing broadly with shallow value propositions invites platform crushing and price wars.

6. Critical Factors Beyond Core Framework

6.1 Model Economics

While not the primary focus of this article, economics ultimately determine viability:

- Inference Efficiency: value-to-cost ratio should exceed 10x.

- Utilisation Rate: profitability typically requires exceeding 70% utilisation.

- Model Mix Optimisation: routing 80% of queries to cheaper models while reserving expensive frontier models for the 20% that need them.

- Burn Multiple: below 1x is excellent, 1-2x is good, above 3x raises concerns.

- Margin Trajectory: margins should improve with scale through optimisation, volume discounts, and operational leverage.
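Two of these metrics reduce to simple arithmetic, sketched below. The per-query prices are hypothetical. Burn multiple is computed with its common definition (net burn divided by net new ARR), which the article does not spell out. The article also leaves the 2-3x range unlabelled, so the sketch reports that range as unclassified rather than guessing.

```python
def blended_cost_per_query(cheap_cost, frontier_cost, cheap_share=0.8):
    """Average cost per query when `cheap_share` of traffic uses the cheap model."""
    if not 0.0 <= cheap_share <= 1.0:
        raise ValueError("cheap_share must be between 0 and 1")
    return cheap_share * cheap_cost + (1.0 - cheap_share) * frontier_cost


def burn_multiple_band(net_burn, net_new_arr):
    """Classify net burn / net new ARR using the bands listed above."""
    if net_new_arr <= 0:
        raise ValueError("net_new_arr must be positive")
    multiple = net_burn / net_new_arr
    if multiple < 1:
        return "excellent"
    if multiple <= 2:
        return "good"
    if multiple <= 3:
        return "not classified in the article"
    return "raises concerns"


# Hypothetical prices: 0.001 per query on a small model, 0.02 on a frontier model.
blended = blended_cost_per_query(cheap_cost=0.001, frontier_cost=0.02)
band = burn_multiple_band(net_burn=15.0, net_new_arr=10.0)  # multiple of 1.5
```

With the 80/20 split, the blended cost in this example comes to about 0.0048 per query against 0.02 for frontier-only routing.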

6.2 External Constraints

- Infrastructure limitations: GPU availability determines training capacity. Power access limits data centre expansion. European AI labs face 12-month waits for power upgrades.

- Regulatory requirements: Healthcare and finance require years of certification. Raidium spent 18 months on medical device approval before generating meaningful revenue.

- Capital access: Training runs cost millions. Compute bills compound monthly. Mistral raised $500M within its first year just to stay competitive.

- Talent scarcity: ML engineers command $500K-$1M+ packages in the US. Anthropic acquired Humanloop for its talent.

6.3 Trust as Universal Gate

Trust multiplies or nullifies everything else.

- Technical trust requires reliability exceeding 99.9% uptime, consistency in outputs, transparency in decision-making, and graceful failure handling.

- Institutional trust demands certifications like ISO, SOC 2, and HIPAA, insurance coverage, and compliance with regulations like GDPR.

- Social trust builds through brand reputation, customer references, and media coverage. Anthropic’s safety focus attracts enterprise customers who might otherwise hesitate.

Trust dynamics follow predictable patterns: building slowly over months or years, collapsing quickly from single incidents, and transferring poorly through acquisitions.

7. Synthesis: Applying the Framework

7.1 How to Evaluate an AI Company

Evaluation requires systematic progression through the hierarchy: start with the technical foundation → evaluate product depth → understand market positioning → apply the sanity checks.
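Because each level depends on the ones before it, the progression can be sketched as a gated walk that stops at the first weak level. The 0-1 scores and the `pass_mark` below are hypothetical scaffolding; the article describes an order of analysis, not a scoring scheme.

```python
LEVELS = [
    "technical foundation",
    "product depth",
    "market position",
    "sustainability",
]


def first_weak_level(scores, pass_mark=0.5):
    """Walk the hierarchy in order and return the first level below `pass_mark`.

    Missing levels count as 0.0. Returns None if every level passes.
    """
    for level in LEVELS:
        if scores.get(level, 0.0) < pass_mark:
            return level
    return None


company = {
    "technical foundation": 0.8,
    "product depth": 0.3,
    "market position": 0.9,
    "sustainability": 0.7,
}
weakest_gate = first_weak_level(company)
```

In this example the strong market score is never reached: the walk stops at product depth, mirroring the claim that later levels cannot compensate for an earlier one.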

7.2 Key Insights

Displacement is the default state in AI. Every company faces obsolescence from capability jumps and platform threats. Building defences is existential.

Thresholds trigger avalanches. Markets stay flat for years then explode when capabilities cross critical points. Timing matters more than gradual improvement.

Depth beats breadth initially. It’s better to be irreplaceable for 100 customers than nice-to-have for 10,000.

Trust gates value creation. Technical capability without trust remains in the lab. One trust violation can destroy years of progress.

Compound advantages win. Single moats erode quickly in AI. Sustainable advantage requires layering multiple defences that reinforce each other. Data plus workflow plus network effects plus trust equals durability.

7.3 Framework Limitations

This framework cannot predict breakthrough innovations. GPT-scale surprises will continue happening. It cannot time markets precisely — thresholds are visible approaching but not predictable far in advance. It cannot eliminate uncertainty since AI evolves too rapidly for complete confidence.

Use the framework as structured thinking, not formulaic answers.

Conclusion

AI valuation requires new mental models. Traditional metrics assume stability that doesn’t exist. This framework addresses AI-specific dynamics: displacement risk, capability thresholds, market creation, and compound advantages.

The four-level hierarchy — technical, product, market, sustainability — provides structure for analysis. The three sanity checks provide validation of defensibility. Together they reveal what creates and sustains value in AI companies.

The framework will evolve as AI evolves. New categories will emerge. New dynamics will become important. But the core questions remain constant: What advantage exists? How does it become indispensable? What market dynamics apply? Will it survive resets?

The age of AI rewards those who build defensible positions. This framework is meant to help identify real advantages, evaluate true moats, and invest in durable value.

The game has just begun.

Article originally posted on WeLoveSota.com

Originally published on Libido Sciendi — 14 Oct 2025